Guest Speaker: Bryan Kramer “Why we can’t rely on technology for a better future”

A tech revolution is coming. Our future depends on how we decide to use it.

Technology has never before played such a large role in our lives. So far, that role has mostly been positive — largely thanks to advances in technology, we’ve never been more prosperous, there have never been more of us, and we’ve never been more at peace.

But the mistaken idea that technology can be relied on to solve all of our problems on its own has become more and more common thanks to these trends. The question is: will those trends continue to hold, or is it just a coincidence that technological advancement has correlated with our well-being?

The idea that technology might be more trouble than it’s worth, or that it may have catastrophic consequences down the line, is nothing new. It’s a widespread theme in post-apocalyptic and dystopian science fiction, genres which dominate sales both in the bookstore and at the box office (The Hunger Games, Maze Runner, Terminator and Divergent series are just a few examples from last year). It’s also a favorite theme of fringe ideologies, from radical environmentalists to religious fundamentalists.

But mainstream culture, despite being inundated with dystopian sci-fi franchises, still sees tech as its starring protagonist. How people use their time and money shows this: they spend their limited resources on what they value most. Three of the top five most valuable companies on earth are tech companies. The majority of people spend almost their entire waking life with tech: data from last year showed that Americans use electronic media for more than 11 hours a day on average.

When almost everything you do on a daily basis involves tech, you’re far more inclined to hero worship than criticism. And since the most common sources of tech alarmism are either blockbuster franchises or paranoids toting protest signs, anxiety over tech’s role in our future can seem about as rational as worrying about aliens or magic.

So the idea that tech might be doing more harm than good is easy to dismiss. Meanwhile, both because it’s been advancing so quickly and because we get so much value from it in our daily lives, tech’s capacity to solve our problems can seem infinite.

But limits to what tech can do for us do exist. These limits stem from the very nature of technology and how it relates to us as human beings, so they won’t go away as tech gets more advanced. And unless we start to seriously acknowledge and understand them, the consequences could be dire.

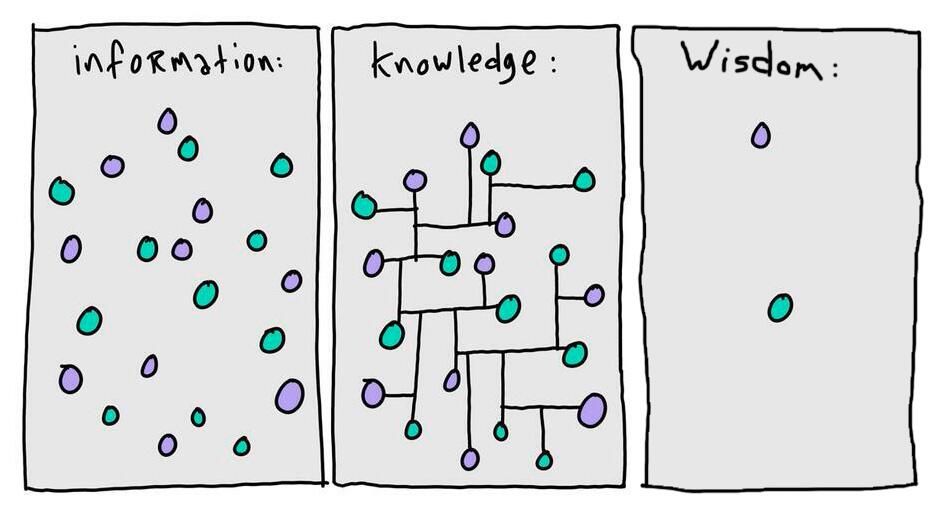

The difference between knowledge and wisdom

There’s an old myth about how humans first got the use of fire — perhaps you’ve heard it before. The story goes that the titan Prometheus stole fire from the gods and gave it to mankind after taking pity on their weak and defenseless bodies. This angered Zeus, who chained Prometheus to a rock where he had his liver torn out every day by an eagle (until eventually Hercules came along and saved him).

The reason why Zeus punished Prometheus so harshly (as Plato tells it) is that fire actually ended up harming humans more than it helped them. Fire represented technological knowledge, which gave mankind power over its surroundings: the power to, for example, make it warmer and brighter at night. The problem arose from what Prometheus didn’t steal, the part of knowledge he couldn’t get his hands on: self-knowledge (i.e. wisdom), which would have given humanity mastery over itself.

Handing mankind knowledge without wisdom was like giving kids matches to play with. They started destroying themselves, and Zeus had to intervene before they burned the house down; he eventually sent the rampaging humans the wisdom they lacked, restoring order. But the damage had already been done, and Prometheus had to pay the price.

The point here is that, while technology makes it possible to do much more than we could without it, it can’t help us decide what to do. So far, we’ve been wise enough not to make any really bad choices, but what if, in the future, technology outpaces our self-mastery? What if, like the mythical humans in the Prometheus story, we become the recipients of divine fire without the wisdom needed to use it responsibly?

Some would argue that the events of the 20th century answered this question already. The obvious example is the invention of nuclear weapons: fire (technically nuclear fission, not combustion) at its most destructive. The first detonation of an atomic bomb took place in July 1945, and a mere 17 years later humanity nearly annihilated itself during the Cuban Missile Crisis. It was a near miss, and the catastrophe could easily have happened. Others might point to the degradation of the environment or the existence of biological weapons as clear evidence that humanity is using its new powers unwisely.

But we’ve taken steps to acknowledge and address these dangers. We designed international laws like the Non-Proliferation Treaty (which was signed by almost every UN member state) to contain the spread of destructive weaponry. The recent Paris Agreement was a serious step forward in addressing climate change.

Some critics of these measures claim they are too ineffectual, but they represent sincere efforts with broad international support that argue for humanity’s collective ability to self-govern. To date, we’ve managed to avoid any technologically induced cataclysms, although our ability to destroy ourselves and our planet has never been greater.

So it’s hard to argue that, so far, technology hasn’t had a net positive impact on our species. We’ve been able to use it wisely enough to raise standards of living around the world. Some of our most dangerous new weapons (like nuclear ICBMs), it’s often said, deterred any major wars from occurring over the last seventy years. During the Cold War, “mutually assured destruction” averted any serious confrontation between the only two powers left standing after World War II. And since the collapse of the Soviet Union, no two nuclear powers have gone to war with one another.

But it’s important to consider whether things are likely to remain this way, or whether instead human knowledge will finally outpace humankind’s collective self-mastery.

On the one hand, that self-mastery seems to be weakening — it certainly hasn’t gotten noticeably stronger in the recent past. Whether or not you believe that our civilization is decadent and in decline, no one who pays attention to the news could possibly think that the world today is racing towards a place of greater wisdom.

On the other hand, this is exactly what’s happening with technology: our tech is racing ahead at an exponential rate of progress. As a matter of fact, there is growing evidence that we’re on the verge of a revolutionary new period when it comes to emerging technologies.

How revolutionary new technologies are on the verge of changing everything

Silicon Valley Investors source 15 minute news

I recently moved to Silicon Valley, which is the site of the most vibrant and pioneering tech community on the face of the planet. Nowhere else are you likely to find such widespread optimism about the future, an optimism driven by an unwavering faith in technology’s power to make the world better.

Not long after getting here I had the good fortune to catch a talk by Vivek Wadhwa of Singularity University which encapsulated this mindset at its most inspiring. This talk prophesied that revolutionary technological change will soon disrupt every major industry and catapult the global economy into a prosperous new age. As Wadhwa has written,

“the United States stands on the cusp of a dramatic revival and rejuvenation, propelled by an amazing wave of technological innovation. A slew of breakthroughs will deliver the enormous productivity gains and the societal dramatic cost savings needed to sustain economic growth and prosperity. These breakthroughs, mostly digital in nature, will complete the shift begun by the Internet away to **a new era where the precepts of Moore’s Law can be applied to virtually any field.**”

The key point here is that (according to Wadhwa) we’re about to see the exponential growth we’ve witnessed in computer processing power, as described by Moore’s Law, brought to bear across all industries and fields of knowledge. One of the more exciting implications is his prediction in a recent article that renewable energy will be practically free in as few as 15 years, and he predicts comparable transformations in everything from medicine to manufacturing.

Mr. Wadhwa, Ray Kurzweil and the folks over at Singularity University aren’t alone in their conviction that humanity is on the verge of exponential technological change. The theme of the World Economic Forum’s meeting last week in Davos was the “Fourth Industrial Revolution”, a term put forward by a recent book of the same title written by the WEF’s executive chairman Dr. Klaus Schwab.

In his book, Schwab echoes Wadhwa’s prediction that tech will prove disruptive across many industries, writing that the coming revolution “entails nothing less than a transformation of humankind” which will emerge from “the staggering confluence of emerging technology breakthroughs, covering wide-ranging fields such as artificial intelligence, robotics, the internet of things, autonomous vehicles, 3D printing, nanotechnology, biotechnology, materials science, energy storage and quantum computing”. The thing to note here is how many transformative technologies Schwab lists, compared to the single breakthrough that led to the original Industrial Revolution (harnessing steam power) — and just think how much that changed things!

Other reputable institutions are making similar predictions. UBS recently published a white paper for Davos which came to the same conclusion as Schwab, predicting that the so-called Fourth Industrial Revolution will be “comparable in magnitude to the advent of the first industrial revolution, the development of assembly line production, or the invention of the micro-chip”. Their report emphasizes that AI and robotics will drive much of this change by automating increasingly complex tasks across industries, but agrees that the consequences will be significant and wide-reaching.

So, although the timetable of these changes is a subject of hot debate, there’s a general consensus among the experts that their impact will be transformative. But it is far less certain whether that transformation will be for the better, and there are some distressing indications that our technology may finally outpace our self-mastery, for good. Even the optimists recognize this frightening prospect: Wadhwa recently acknowledged in a tweet that he worried about the dark side of technology. Schwab’s concerns are even more dire: he writes that “from the perspective of human history, there has never been a time of greater promise or potential peril.”

To put it another way, we really are playing with fire.

Technology is weakening our power over ourselves

It’s disquieting enough that our maturity as a species is being outpaced by how big and destructive our toys are getting. But now there’s evidence that our toys might actually be making us even more immature. We’re becoming more likely, in other words, to burn the house down even if the matches we’re playing with don’t turn into a blow torch (which they almost certainly will).

One worrying example is that tech may be having a negative effect on cognition. This might seem counter-intuitive in the age of the search engine and the online encyclopedia, but as it turns out, the internet is wreaking havoc on our ability to think clearly and retain information. The author Nicholas Carr points out that “when we go online, we enter an environment that promotes cursory reading, hurried and distracted thinking, and superficial learning. Even as the Internet grants us easy access to vast amounts of information, it is turning us into shallower thinkers, literally changing the structure of our brain.”

This picture is borne out by the data. A recent study found that Millennials aged 18 to 34, people who spent their formative years online, were worse than people aged 55 and over at remembering what day it is, where they put their keys, or whether or not they had showered. So even though our access to information has never been greater, our ability to retain and apply that information is declining — a trend so pronounced that even Google’s Eric Schmidt has expressed worry that “the overwhelming rapidity of information… is, in fact, affecting cognition”. In a future that will involve outsourcing more and more cognitive tasks to AI, how much more pronounced will this trend become? And what role can a stupefied humanity expect to play in that future?

Even more worrying is the fact that increasing utilization of AI may be slowly destroying jobs, and doing so permanently. The correlation of hard work and merit with income in a free market economy has always been viewed as the great benefit of capitalism, and unequal distribution of wealth is tolerated as a necessary cost of those benefits. But what if the total number of available jobs begins to shrink, rather than grow, as economic productivity increases? Although such a shift would be unprecedented, there’s growing evidence that this is exactly what’s starting to happen.

MIT’s Eric Brynjolfsson and his colleague Andrew McAfee have argued at length that we’re now witnessing a “decoupling” of productivity and employment, and that technology has begun to destroy more jobs than it has created since the year 2000. “It’s one of the dirty secrets of economics” says Brynjolfsson, that “technology progress does grow the economy and create wealth, but there is no economic law that says everyone will benefit”.

Automation, the use of machines instead of humans for a task, is a major cause of this decoupling: one report claims that almost half of American jobs will be automated within the next two decades. But even now a substantial portion of what employees are paid to do on a daily basis could be automated. Research released by McKinsey last year found that fully 45% of activities that workers are paid to perform, even amongst some of the highest paid occupations, can be automated by adapting technologies that already exist.

Some argue that new industries and jobs will be created to replace those lost to automation, as happened during last century’s transition from a manufacturing to a service economy in the US. But if, thanks to AI, thought itself at increasing levels of complexity can be automated, it seems very possible that we aren’t undergoing a transition to a new economy but rather a transformation to a new kind of economy.

This is why Brynjolfsson thinks that technology isn’t just contributing to increasing inequality, but might in fact be the main cause. Dr. Klaus Schwab points out, for example, that the top three Silicon Valley companies in 2014 generated roughly the same revenue as the top three companies in Detroit in 1990 (while being valued at many times the market capitalization), but with only about one tenth the number of employees.

Economic inequality leads to concentration of wealth, which in turn leads to a concentration of power within our civilization. Modern states (mostly) adopted the liberal democratic model of government precisely to break up that kind of concentrated power, and that is what made it one of the biggest advances in our political wisdom in the last 300 years.

Liberal democracies are designed to disperse power amongst the courts, the constitution, the laws, the state, and the people. The reason this model was such a big step forward — and such a hit with the rest of the world after it debuted in the American and French revolutions — is that dispersed power prevents abuse and corruption, whereas concentrated power promotes them.

Greater equality also means less social unrest. If nearly half of all jobs simply disappear in the next twenty years, there will be an immense backlash and massive political instability as a result. The trend toward inequality (which will only accelerate along with progress in tech) is therefore deeply troubling, even if you are lucky enough not to be on the losing side of it.

We need a new Renaissance

In the Prometheus myth, the hapless titan finds out to his dismay how wrong he was to think technology was the same thing as progress when he gave humans knowledge without wisdom. Today, as our own knowledge races ahead and wisdom appears to be an increasingly scarce resource, we can no longer afford the complacent belief that we can rely on technology to bring us a bright new future and solve all of our problems.

I’m not saying that we should try to halt the forward march of technology (we couldn’t do it even if we tried). But one thing is very clear: as we sail into the stormy waters that the coming tech revolution will bring, we’ll need a cultural revolution just as drastic to navigate that storm safely.

Seneca once observed of humanity that “our plans go wrong because they have no aim; if you don’t know what port you’re making for, no wind is favorable.” The tempestuous winds of technological change are blowing at our backs, propelling us forward into the future at great speed. But it remains unclear where we are headed.